Utilities are pieces of software which perform a single, specific task in a computer system and usually do so in the background with little or no user interaction. Utilities have been around since the dawn of computing and basically fall into the category of “useful little programs.” We usually use them to do simple, repetitive tasks or to perform some kind of automation such as regularly backing up a folder on a computer to the cloud.

The Purpose of Utility Software

There are countless types and examples of utility software, but for the purpose of the exam you only need to be aware of three: encryption, defragmentation and compression. The exam-ready definition of a utility is:

Utility – A program or piece of software with one specific purpose, usually related to the upkeep or maintenance of a system.

Some common examples of utility software that are widely used are:

- Encryption

- Defragmentation

- Compression

- Anti-Virus

- Disk Analysis/repair

- Auto-Update

- Firewall

However, remember that you only need to know about the first three (encryption, defragmentation and compression) in any detail for your exam. It won’t hurt to know about the others, but you won’t be expected to know in detail about their functionality or how they work.

Encryption software

As you should know from 1.4.2 – Identifying and Preventing Vulnerabilities, encryption is the encoding or scrambling of data to ensure it cannot be read/understood if it is intercepted by a third party. As far as encryption software goes, there really is very little that you need to know for the exam beyond the definition of encryption and how public/private key encryption works.

An encryption utility would be used to encrypt or decrypt files either automatically or on demand. For example, files may be automatically encrypted when they are saved or before they are sent across a network. If they are accessed by anyone other than the intended recipient, they should be unreadable.

Encryption often works on an asymmetric key system: the public key is used to encrypt files, but it cannot be used to decrypt them. Only the matching private key (which is different from the public key) can be used to decrypt the data. For detail on this, you should refer to 1.4.2 – Identifying and Preventing Vulnerabilities.
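To make the public/private key idea concrete, here is a minimal sketch of RSA-style arithmetic in Python. The tiny primes, the exponent 17 and the function names `encrypt` and `decrypt` are illustrative assumptions, not how any real encryption utility is written. Real keys are hundreds of digits long and real software adds padding and other safeguards.

```python
# Toy asymmetric encryption with very small numbers (NOT secure, illustration only).
p, q = 61, 53                # two secret primes (assumed values for the demo)
n = p * q                    # the modulus, shared by both keys
phi = (p - 1) * (q - 1)      # used only to work out the private exponent
e = 17                       # public exponent
d = pow(e, -1, phi)          # private exponent: modular inverse of e (Python 3.8+)

public_key = (e, n)          # can be given to anyone
private_key = (d, n)         # must be kept secret


def encrypt(message, key):
    """Encrypt a number smaller than n using a key (exponent, modulus)."""
    exponent, modulus = key
    return pow(message, exponent, modulus)


def decrypt(ciphertext, key):
    """Apply a key (exponent, modulus) to a ciphertext."""
    exponent, modulus = key
    return pow(ciphertext, exponent, modulus)


ciphertext = encrypt(65, public_key)
recovered = decrypt(ciphertext, private_key)   # the private key gets the message back
wrong = decrypt(ciphertext, public_key)        # the public key does not

print(ciphertext, recovered, wrong)
```

Running the sketch shows that only the private key recovers the original value 65. Applying the public key a second time just produces another scrambled number.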

Defragmentation software

Before we even look at what defragmentation is, it is essential to understand this one, simple fact:

Defragmentation applies only to hard disk drives. Solid State Drives (SSDs) store data in a different way and should never be defragmented!

Defragmentation only applies to magnetic secondary storage (hard disk drives) using certain file systems, usually those on Windows-based systems.

Defragmentation is putting fragments of files together to make them contiguous (together in one continuous block on a drive) and moving them all to the start of the disk.

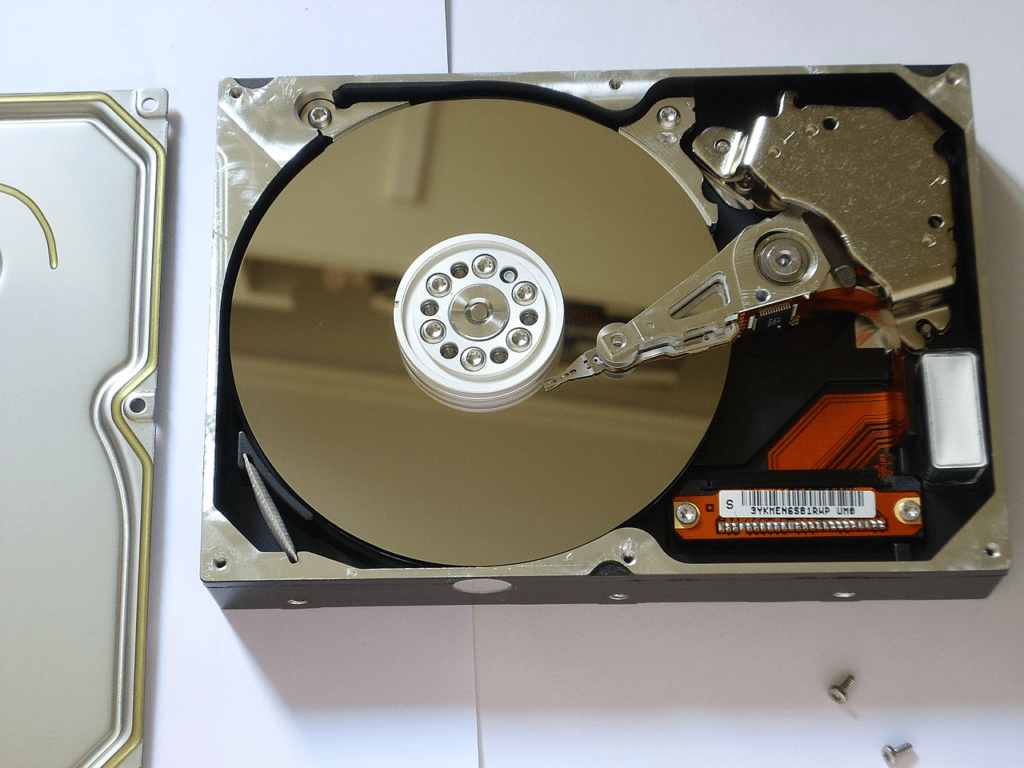

Some file systems aren’t perfect. A hard disk is a “mass storage device”, meaning it is simply a container capable of holding a huge number of bits. Hard drives contain spinning metal discs called platters, which physically hold the data.

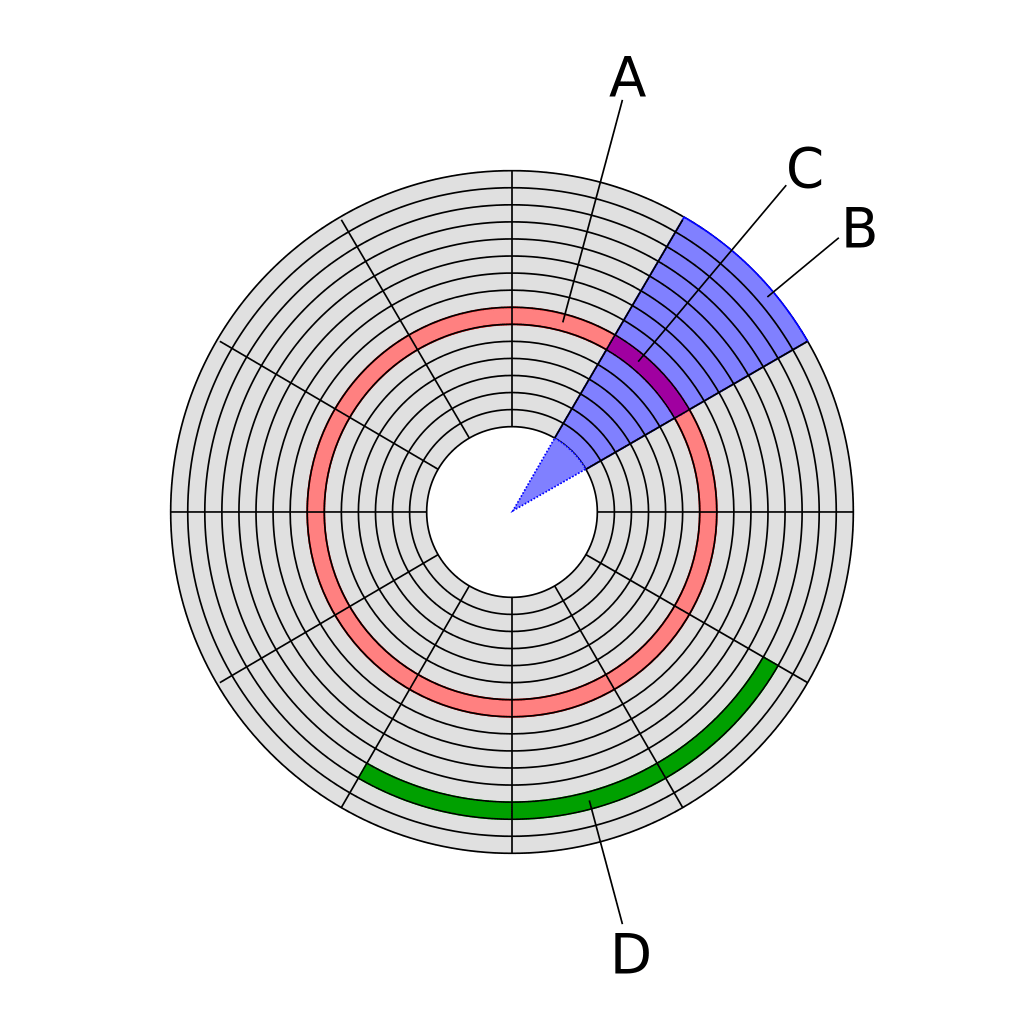

Platters are divided up using a system of tracks – these are defined circles around the disc, just as you would find on a running track, for example. Each track is then further split into segments called “sectors”.

A sector can hold a fixed amount of data, traditionally 512 bytes, and these sectors can be grouped together to form “clusters”, which are usually around 4 KB in size. This is important to understand because it explains an important property of hard drive based storage.

When data is read from or written to a hard drive, it is done one cluster at a time, or 4 KB at a time. This means that if a file to be saved is smaller than 4 KB, it will still take up an entire cluster. For clarity, if you save a 1 KB file on a hard drive, it will take up 4 KB regardless, because drives only read and write in whole clusters.
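The whole-cluster rule is simple arithmetic, sketched below in Python. The constants use the typical 512 byte sector and 4 KB cluster described above, and the function name `size_on_disk` is just an illustrative choice. Real drives and file systems may use other sizes.

```python
SECTOR_SIZE = 512      # bytes per sector (typical value, assumed)
CLUSTER_SIZE = 4096    # bytes per cluster (4 KB, assumed)

# A 4 KB cluster is made up of this many 512 byte sectors.
SECTORS_PER_CLUSTER = CLUSTER_SIZE // SECTOR_SIZE


def size_on_disk(file_size_bytes, cluster_size=CLUSTER_SIZE):
    """Return the space a file occupies when stored in whole clusters."""
    # Round up to the next whole cluster using integer arithmetic.
    clusters_needed = (file_size_bytes + cluster_size - 1) // cluster_size
    return clusters_needed * cluster_size


print(SECTORS_PER_CLUSTER)     # sectors in one cluster
print(size_on_disk(1024))      # a 1 KB file
print(size_on_disk(4097))      # one byte over a full cluster
```

A 1 KB file still occupies one full cluster, and a file that is a single byte over 4 KB needs two.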

Furthermore, files do not need to be stored on a disk on consecutive tracks, or even on the same platter. Files are broken up into 4 KB cluster-sized chunks and are stored wherever there is free space on the disk. This means a single file may be stored in a scattered arrangement across a disk.

When reading data, the hard drive controller must move the head to the correct track, wait for the correct sector to rotate under the head and then read the cluster of data. If data is stored in consecutive sectors and tracks, one after the other, then this can happen very quickly. However, if data is scattered, then the head will need to keep moving and waiting for sectors, repeating this process until all of the data has been read or written. This results in significant slowdowns.
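A very rough way to picture the cost of scattered data is to add up how far the head has to travel between tracks. The sketch below is a simplified model only: it ignores rotation time and everything else a real drive does, and the track numbers are made up for illustration.

```python
def head_travel(track_sequence, start_track=0):
    """Total number of tracks the head crosses to visit each track in order."""
    position = start_track
    total = 0
    for track in track_sequence:
        total += abs(track - position)   # distance moved for this read
        position = track
    return total


# Four clusters of the same file, stored next to each other...
contiguous = [10, 10, 11, 11]
# ...and the same four clusters scattered across the disk.
scattered = [10, 200, 15, 180]

print(head_travel(contiguous))
print(head_travel(scattered))
```

In this model the scattered file makes the head travel many times further than the contiguous one, even though both files are the same size.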

File systems will try to store each segment of a file in the first available space on the disk which is big enough to hold it. This is nearly always an imperfect fit – files grow or shrink, get deleted, duplicated or moved. Initially, files may well be stored in continuous tracks and sectors, but as these changes happen “holes” begin to appear in storage that are not big enough to hold new files or data.
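The way “holes” lead to fragmentation can be sketched with a tiny simulated disk. This is a simplified model, not how any particular file system works: the disk is a Python list of clusters, and the helper names `allocate`, `delete` and `fragments` are invented for the example. New data simply goes into the first free clusters found.

```python
FREE = None  # marker for an unused cluster


def allocate(disk, name, clusters_needed):
    """Store a file in the first free clusters, scanning from the start of the disk."""
    if disk.count(FREE) < clusters_needed:
        raise ValueError("not enough free space")
    placed = 0
    for i, owner in enumerate(disk):
        if placed == clusters_needed:
            break
        if owner is FREE:
            disk[i] = name
            placed += 1


def delete(disk, name):
    """Free every cluster belonging to a file, leaving a hole behind."""
    for i, owner in enumerate(disk):
        if owner == name:
            disk[i] = FREE


def fragments(disk, name):
    """Count the separate runs of clusters that make up a file."""
    count = 0
    previous = FREE
    for owner in disk:
        if owner == name and previous != name:
            count += 1
        previous = owner
    return count


disk = [FREE] * 12
allocate(disk, "A", 3)
allocate(disk, "B", 3)
allocate(disk, "C", 3)
delete(disk, "B")          # leaves a 3-cluster hole in the middle
allocate(disk, "D", 5)     # too big for the hole, so it gets split up

print(disk)
print(fragments(disk, "D"))
```

File D does not fit in the hole left by B, so part of it fills the hole and the rest lands after C. It ends up in two separate pieces.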

Eventually this inefficiency ends up slowing the system down, as the drive’s heads physically have to move further to reach the data for each file being read or written. The more broken up or “fragmented” a file is, the more it slows the system down.

Defragmentation utilities will examine the structure of files on the hard disk and then do two things:

- Move files so that they are stored contiguously on tracks/sectors. In other words, files are put back together into one piece on the disk.

- Move files to the start of the disk so that they can be read more quickly. The heads need to move a shorter distance to read files at the start of the disk.
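The two steps above can be sketched on a simulated disk, where each list item is one cluster labelled with the file that owns it. This is only a model of the idea, and the function name `defragment` is an illustrative assumption. A real utility has to move data safely in place while the system is running.

```python
def defragment(disk):
    """Return a new layout with each file in one piece, packed at the start."""
    # Note the order in which files first appear on the disk.
    order = []
    for owner in disk:
        if owner is not None and owner not in order:
            order.append(owner)

    # Write each file's clusters back as one contiguous run from the start.
    compacted = []
    for name in order:
        compacted.extend([name] * disk.count(name))

    # Whatever is left over becomes one block of free space at the end.
    compacted.extend([None] * (len(disk) - len(compacted)))
    return compacted


fragmented = ["A", "A", "A", "D", "D", "D", "C", "C", "C", "D", "D", None]
tidy = defragment(fragmented)

print(tidy)
```

After the call, file D sits in one continuous block and all of the free space is gathered at the end of the disk.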

This greatly speeds up disk read/write times and makes the system perform better. Defragmentation is becoming less necessary because hard disks are increasingly used for long-term data storage, where data is moved around far less. Operating system and working storage, by contrast, becomes fragmented very quickly in daily use.

Compression Software

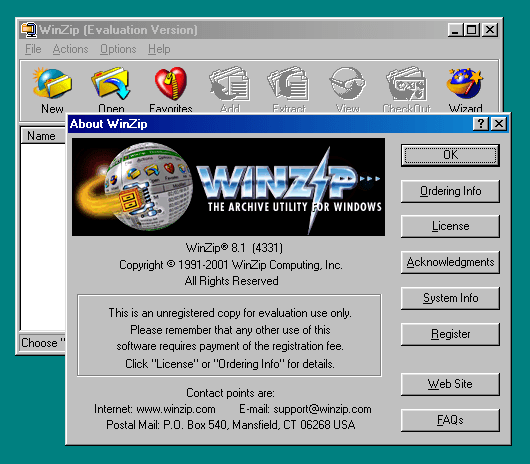

Compression utilities reduce the size of files. They take a single file or a collection of files and produce an “archive” that should be much smaller than the original collection of files. This is extremely convenient: not only do you save storage space, but a huge collection of potentially thousands of files can be handled as one single file. Imagine you wish to send a collection of files to someone via email. Putting them in an archive would make this much easier, as you only have one, smaller file to send.
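As a rough illustration of what an archive is, the sketch below uses Python’s standard `zipfile` module to bundle several files into one compressed ZIP held in memory. The file names and contents are made up for the demo, and the helper name `make_archive` is an illustrative choice.

```python
import io
import zipfile


def make_archive(files):
    """Bundle a dict of {file name: bytes} into a single compressed ZIP archive."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        for name, data in files.items():
            archive.writestr(name, data)
    return buffer.getvalue()


# Three made-up text files with very repetitive contents.
files = {f"notes_{i}.txt": b"utility software " * 200 for i in range(3)}

archive_bytes = make_archive(files)
original_size = sum(len(data) for data in files.values())

print(original_size, len(archive_bytes))

# Open the archive again to list what it contains.
with zipfile.ZipFile(io.BytesIO(archive_bytes)) as archive:
    names_in_archive = archive.namelist()
print(names_in_archive)
```

The three files travel as one archive that is smaller than the originals added together. Repetitive data like this compresses especially well, so real files will usually shrink less.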

Compression is typically performed when a file is saved, to reduce the amount of data stored on disk. It is also really useful when the file is going to be sent somewhere (think email, streaming or sending your mate a Snapchat). The less data you need to send, the quicker (and potentially cheaper if you’re on a data plan) it will be, and the less space it will take up on your device (think how annoying it is when your phone storage is full). Compression comes in two flavours, lossy and lossless, but a compression tool such as WinZip, 7-Zip or WinRAR will always use lossless compression methods.
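Lossless means the original data can be rebuilt exactly from the compressed version. The short sketch below shows this with Python’s standard `zlib` module. The sample text is made up, and `zlib` is used here simply as a convenient lossless compressor, not because any particular utility is built on it in this way.

```python
import zlib

# Made-up sample data with lots of repetition.
original = b"the quick brown fox jumps over the lazy dog. " * 100

compressed = zlib.compress(original)      # shrink the data
restored = zlib.decompress(compressed)    # rebuild it from the compressed form

print(len(original), len(compressed))
print(restored == original)               # lossless: nothing was thrown away
```

The restored bytes match the original exactly, which is why lossless methods are the right choice for documents, programs and any other data where every bit matters.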

There are numerous compression utilities and formats such as 7-Zip, RAR, Zip and so forth, which use different algorithms to reduce the amount of data. TAR is often mentioned alongside them, although on its own it only bundles files together and is usually paired with a separate compressor such as gzip. File compression is so commonplace that basic ZIP support is built into nearly all modern operating systems, which removes the need for users to install specific software utilities to perform basic compression or decompression tasks.